Looking to such specialized nervous systems as a model for artificial intelligence may prove just as valuable, if not more so, than studying the human brain. Consider the brains of those ants in your pantry. Each has some 250,000 neurons. Larger insects have closer to 1 million. In my research at Sandia National Laboratories in Albuquerque, I study the brains of one of these larger insects, the dragonfly. I and my colleagues at Sandia, a national-security laboratory, hope to take advantage of these insects’ specializations to design computing systems optimized for tasks like intercepting an incoming missile or following an odor plume. By harnessing the speed, simplicity, and efficiency of the dragonfly nervous system, we aim to design computers that perform these functions faster and at a fraction of the power that conventional systems consume.

Looking to a dragonfly as a harbinger of future computer systems may seem counterintuitive. The developments in artificial intelligence and machine learning that make news are typically algorithms that mimic human intelligence or even surpass people’s abilities. Neural networks can already perform as well as, if not better than, people at some specific tasks, such as detecting cancer in medical scans. And the potential of these neural networks stretches far beyond visual processing. The computer program AlphaZero, trained by self-play, is the best Go player in the world. Its sibling AI, AlphaStar, ranks among the best StarCraft II players.

Such feats, however, come at a cost. Developing these sophisticated systems requires massive amounts of processing power, generally available only to select institutions with the fastest supercomputers and the resources to support them. And the energy cost is off-putting. Recent estimates suggest that the carbon emissions resulting from developing and training a natural-language processing algorithm are greater than those produced by four cars over their lifetimes.

It takes the dragonfly only about 50 milliseconds to begin to respond to a prey’s maneuver. If we assume 10 ms for cells in the eye to detect and transmit information about the prey, and another 5 ms for muscles to start producing force, this leaves only 35 ms for the neural circuitry to make its calculations. Given that it typically takes a single neuron at least 10 ms to integrate inputs, the underlying neural network can be only about three layers deep.

But does an artificial neural network really need to be large and complex to be useful? I believe it doesn’t. To reap the benefits of neural-inspired computers in the near term, we must strike a balance between simplicity and sophistication.

Which brings me back to the dragonfly, an animal with a brain that may provide precisely the right balance for certain applications.

If you have ever encountered a dragonfly, you already know how fast these beautiful creatures can zoom, and you’ve seen their incredible agility in the air. Perhaps less obvious from casual observation is their excellent hunting ability: Dragonflies successfully capture up to 95 percent of the prey they pursue, eating hundreds of mosquitoes in a day.

The physical prowess of the dragonfly has certainly not gone unnoticed. For decades, U.S. agencies have experimented with using dragonfly-inspired designs for surveillance drones. Now it is time to turn our attention to the brain that controls this tiny hunting machine.

While dragonflies may not be able to play strategic games like Go, a dragonfly does demonstrate a form of strategy in the way it aims ahead of its prey’s current location to intercept its dinner. This takes calculations performed extremely fast: It typically takes a dragonfly just 50 milliseconds to start turning in response to a prey’s maneuver. It does this while tracking the angle between its head and its body, so that it knows which wings to flap faster to turn ahead of the prey. And it also tracks its own movements, because as the dragonfly turns, the prey will also appear to move.

The model dragonfly reorients in response to the prey’s turning. The smaller black circle is the dragonfly’s head, held at its initial position. The solid black line indicates the direction of the dragonfly’s flight; the dotted blue lines are the plane of the model dragonfly’s eye. The purple star is the prey’s position relative to the dragonfly, with the dotted purple line indicating the dragonfly’s line of sight.

So the dragonfly’s brain is performing a remarkable feat, given that the time needed for a single neuron to add up all its inputs (called its membrane time constant) exceeds 10 milliseconds. If you factor in time for the eye to process visual information and for the muscles to produce the force needed to move, there’s really only time for three, maybe four, layers of neurons, in sequence, to add up their inputs and pass on information.
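The latency argument can be checked with a few lines of arithmetic. This is only a back-of-the-envelope sketch: the 10 ms for the eye and 5 ms for the muscles are the rough assumptions quoted in this article, and the constant names are made up for illustration.

```python
# Rough latency budget for a dragonfly's turn (all values in milliseconds).
REACTION_MS = 50             # prey maneuver to start of the dragonfly's turn
EYE_MS = 10                  # assumed: eye cells detect and transmit the image
MUSCLE_MS = 5                # assumed: muscles begin producing force
NEURON_INTEGRATION_MS = 10   # approximate time for one neuron to sum its inputs

# Whatever is left over is available to the neural circuitry.
neural_budget_ms = REACTION_MS - EYE_MS - MUSCLE_MS

# Layers work in sequence, so the budget caps the depth of the network.
max_layers = neural_budget_ms // NEURON_INTEGRATION_MS

print(neural_budget_ms)  # 35
print(max_layers)        # 3
```

Shaving a few milliseconds off the eye or muscle estimates would leave room for a fourth layer, which is why the depth is best read as "three, maybe four."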

Could I build a neural network that works like the dragonfly interception system? I also wondered about uses for such a neural-inspired interception system. Being at Sandia, I immediately considered defense applications, such as missile defense, imagining missiles of the future with onboard systems designed to rapidly calculate interception trajectories without affecting a missile’s weight or power consumption. But there are civilian applications as well.

For example, the algorithms that control self-driving cars might be made more efficient, no longer requiring a trunkful of computing equipment. If a dragonfly-inspired system can perform the calculations to plot an interception trajectory, perhaps autonomous drones could use it to avoid collisions. And if a computer could be made the same size as a dragonfly brain (about 6 cubic millimeters), perhaps insect repellent and mosquito netting will one day become a thing of the past, replaced by tiny insect-zapping drones!

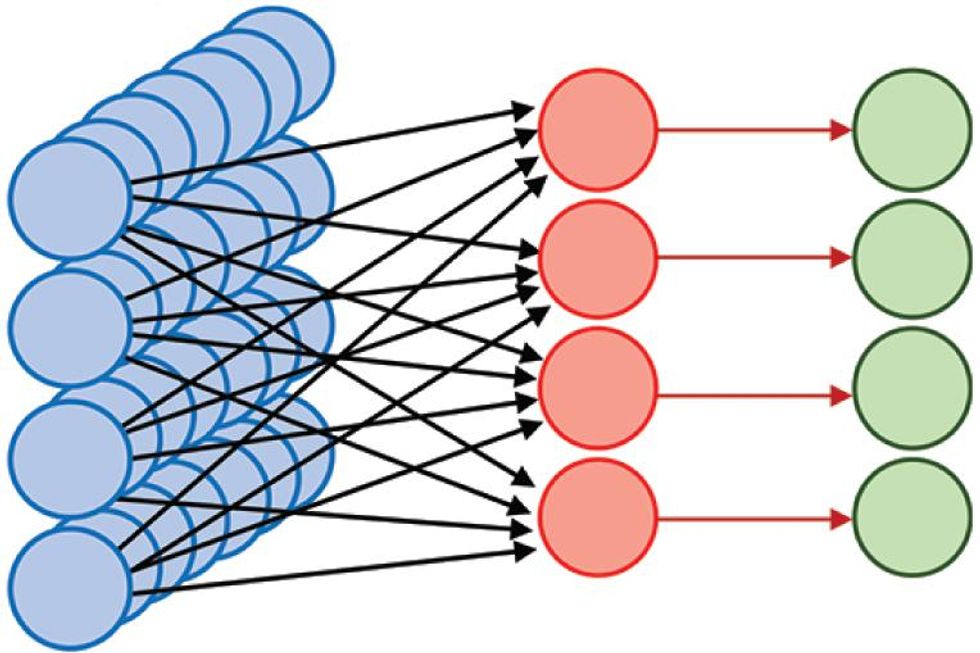

To begin to answer these questions, I created a simple neural network to stand in for the dragonfly’s nervous system and used it to calculate the turns that a dragonfly makes to capture prey. My three-layer neural network exists as a software simulation. Initially, I worked in Matlab simply because that was the coding environment I was already using. I have since ported the model to Python.

Because dragonflies have to see their prey to capture it, I started by simulating a simplified version of the dragonfly’s eyes, capturing the minimum detail required for tracking prey. Although dragonflies have two eyes, it’s generally accepted that they do not use stereoscopic depth perception to estimate distance to their prey. In my model, I did not model both eyes. Nor did I try to match the resolution of a dragonfly eye. Instead, the first layer of the neural network includes 441 neurons that represent input from the eyes, each describing a specific region of the visual field. These regions are tiled to form a 21-by-21-neuron array that covers the dragonfly’s field of view. As the dragonfly turns, the location of the prey’s image in the dragonfly’s field of view changes. The dragonfly calculates turns required to align the prey’s image with one (or a few, if the prey is large enough) of these “eye” neurons. A second set of 441 neurons, also in the first layer of the network, tells the dragonfly which eye neurons should be aligned with the prey’s image, that is, where the prey should be within its field of view.
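To make the 21-by-21 input array concrete, here is a minimal sketch of how a prey direction could be turned into one active “eye” neuron. It is not the code of the model described here: the 180-degree field of view, the function names, and the one-neuron-per-prey encoding are all assumptions chosen for illustration.

```python
GRID = 21                   # 21 x 21 "eye" neurons, 441 in total
FIELD_OF_VIEW_DEG = 180.0   # hypothetical field of view along each axis

def angle_to_index(angle_deg):
    """Map an angle in [-FOV/2, +FOV/2] to one of GRID bins (0 .. GRID-1)."""
    half = FIELD_OF_VIEW_DEG / 2
    clamped = max(-half, min(half, angle_deg))
    fraction = (clamped + half) / FIELD_OF_VIEW_DEG   # 0.0 .. 1.0
    return min(GRID - 1, int(fraction * GRID))

def eye_layer(azimuth_deg, elevation_deg):
    """Return 441 activations with a single 1 where the prey's image falls."""
    activations = [0] * (GRID * GRID)
    row = angle_to_index(elevation_deg)
    col = angle_to_index(azimuth_deg)
    activations[row * GRID + col] = 1
    return activations

# Prey straight ahead lands on the center neuron of the array.
layer = eye_layer(azimuth_deg=0.0, elevation_deg=0.0)
print(len(layer), layer.index(1))  # 441 220
```

The second set of 441 neurons, which marks where the prey’s image should be, could use the same encoding with a target direction in place of the observed one.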

The product dragonfly engages its prey.

Processing (the calculations that take input describing the movement of an object across the field of vision and turn it into instructions about which direction the dragonfly needs to turn) happens between the first and third layers of my artificial neural network. In this second layer, I used an array of 194,481 (21⁴) neurons, likely much larger than the number of neurons used by a dragonfly for this task. I precalculated the weights of the connections between all the neurons in the network. While these weights could be learned with enough time, there is an advantage to “learning” through evolution and preprogrammed neural network architectures. Once it comes out of its nymph stage as a winged adult (technically referred to as a teneral), the dragonfly does not have a parent to feed it or show it how to hunt. The dragonfly is in a vulnerable state and getting used to a new body; it would be disadvantageous to have to figure out a hunting strategy at the same time. I set the weights of the network to allow the model dragonfly to calculate the correct turns to intercept its prey from incoming visual information.

What turns are those? Well, if a dragonfly wants to catch a mosquito that’s crossing its path, it can’t just aim at the mosquito. To borrow from what hockey player Wayne Gretzky once said about pucks, the dragonfly has to aim for where the mosquito is going to be. You might think that following Gretzky’s advice would require a complex algorithm, but in fact the strategy is quite simple: All the dragonfly needs to do is to maintain a constant angle between its line of sight with its lunch and a fixed reference direction.

Readers who have any experience piloting boats will understand why that is. They know to get worried when the angle between the line of sight to another boat and a reference direction (for example due north) remains constant, because they are on a collision course. Mariners have long avoided steering such a course, known as parallel navigation, to avoid collisions.

Translated to dragonflies, which want to collide with their prey, the prescription is simple: keep the line of sight to your prey constant relative to some external reference. However, this task is not necessarily trivial for a dragonfly as it swoops and turns, collecting its meals. The dragonfly does not have an internal gyroscope (that we know of) that will maintain a constant orientation and provide a reference regardless of how the dragonfly turns. Nor does it have a magnetic compass that will always point north. In my simplified simulation of dragonfly hunting, the dragonfly turns to align the prey’s image with a specific location on its eye, but it needs to calculate what that location should be.
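The constant-bearing rule is easy to try out in a flat, two-dimensional world. The sketch below is a plain geometric simulation, not the neural network: the pursuer matches the prey’s motion across the line of sight and spends its remaining speed closing the gap. The starting positions, velocities, time step, and capture radius are hypothetical values picked for illustration.

```python
import math

def constant_bearing_velocity(pursuer, prey, prey_vel, speed):
    """Return the pursuer velocity that keeps the line of sight from rotating."""
    dx, dy = prey[0] - pursuer[0], prey[1] - pursuer[1]
    dist = math.hypot(dx, dy)
    ux, uy = dx / dist, dy / dist     # unit vector along the line of sight
    nx, ny = -uy, ux                  # unit vector perpendicular to it
    # Match the prey's sideways motion so the bearing does not change.
    perp = prey_vel[0] * nx + prey_vel[1] * ny
    if abs(perp) > speed:
        raise ValueError("pursuer is too slow to hold the bearing constant")
    # Whatever speed is left over goes into closing the distance.
    along = math.sqrt(speed**2 - perp**2)
    return (along * ux + perp * nx, along * uy + perp * ny)

def simulate(prey_vel=(-0.6, 0.8), speed=1.0, dt=0.01, max_steps=5000,
             capture_radius=0.05):
    """Run the chase; return (capture time or None, list of bearings)."""
    pursuer = [0.0, 0.0]
    prey = [10.0, 0.0]
    bearings = []
    for step in range(max_steps):
        dx, dy = prey[0] - pursuer[0], prey[1] - pursuer[1]
        if math.hypot(dx, dy) < capture_radius:
            return step * dt, bearings
        bearings.append(math.atan2(dy, dx))
        vx, vy = constant_bearing_velocity(pursuer, prey, prey_vel, speed)
        pursuer[0] += vx * dt
        pursuer[1] += vy * dt
        prey[0] += prey_vel[0] * dt
        prey[1] += prey_vel[1] * dt
    return None, bearings

capture_time, bearings = simulate()
bearing_spread = max(bearings) - min(bearings)
print(capture_time, bearing_spread)
```

With these values both animals fly at the same speed, the bearing never changes, and the capture comes after roughly 8.3 time units. Note that a prey flying directly away at equal speed would never be caught, so the rule is a steering strategy, not a guarantee.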

The third and final layer of my simulated neural network is the motor-command layer. The outputs of the neurons in this layer are high-level instructions for the dragonfly’s muscles, telling the dragonfly in which direction to turn. The dragonfly also uses the output of this layer to predict the effect of its own maneuvers on the location of the prey’s image in its field of view and updates that projected location accordingly. This updating allows the dragonfly to hold the line of sight to its prey steady, relative to the external world, as it approaches.
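The bookkeeping behind that update can be sketched in one dimension. Assuming (for illustration only) that the direction to the prey in the outside world equals the dragonfly’s heading plus the angle at which the prey appears on the eye, a turn of some angle must shift the target eye position by the opposite amount. The class and method names below are hypothetical.

```python
def wrap_deg(angle):
    """Wrap an angle in degrees to the range [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0

class BearingTracker:
    """Tracks where the prey image should sit so the world bearing stays fixed."""

    def __init__(self, heading_deg, prey_eye_deg):
        self.heading_deg = heading_deg
        self.target_eye_deg = prey_eye_deg   # desired image position on the eye

    def world_bearing(self):
        """Line-of-sight direction relative to the outside world."""
        return wrap_deg(self.heading_deg + self.target_eye_deg)

    def apply_turn(self, turn_deg):
        """Turn the body and shift the target by the predicted image motion."""
        self.heading_deg = wrap_deg(self.heading_deg + turn_deg)
        self.target_eye_deg = wrap_deg(self.target_eye_deg - turn_deg)

tracker = BearingTracker(heading_deg=0.0, prey_eye_deg=20.0)
before = tracker.world_bearing()
tracker.apply_turn(15.0)
after = tracker.world_bearing()
print(before, tracker.target_eye_deg, after)  # 20.0 5.0 20.0
```

The target on the eye moves from 20 degrees to 5 degrees, but the line of sight relative to the world is unchanged, which is the condition the interception strategy needs.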

It is possible that biological dragonflies have evolved additional tools to help with the calculations needed for this prediction. For example, dragonflies have specialized sensors that measure body rotations during flight as well as head rotations relative to the body. If these sensors are fast enough, the dragonfly could calculate the effect of its movements on the prey’s image directly from the sensor outputs or use one method to cross-check the other. I did not consider this possibility in my simulation.

To test this three-layer neural network, I simulated a dragonfly and its prey, moving at the same speed through three-dimensional space. As they do so, my modeled neural-network brain “sees” the prey, calculates where to point to keep the image of the prey at a constant angle, and sends the appropriate instructions to the muscles. I was able to show that this simple model of a dragonfly’s brain can indeed successfully intercept other bugs, even prey traveling along curved or semi-random trajectories. The simulated dragonfly does not quite achieve the success rate of the biological dragonfly, but it also does not have all the advantages (for example, impressive flying speed) for which dragonflies are known.

More work is needed to determine whether this neural network is really incorporating all the secrets of the dragonfly’s brain. Researchers at the Howard Hughes Medical Institute’s Janelia Research Campus, in Virginia, have developed tiny backpacks for dragonflies that can measure electrical signals from a dragonfly’s nervous system while it is in flight and transmit these data for analysis. The backpacks are small enough not to distract the dragonfly from the hunt. Similarly, neuroscientists can also record signals from individual neurons in the dragonfly’s brain while the insect is held motionless but made to think it’s moving by presenting it with the appropriate visual cues, creating a dragonfly-scale virtual reality.

Data from these systems allow neuroscientists to validate dragonfly-brain models by comparing their activity with activity patterns of biological neurons in an active dragonfly. While we cannot yet directly measure individual connections between neurons in the dragonfly brain, I and my collaborators will be able to infer whether the dragonfly’s nervous system is making calculations similar to those predicted by my artificial neural network. That will help determine whether connections in the dragonfly brain resemble my precalculated weights in the neural network. We will inevitably find ways in which our model differs from the actual dragonfly brain. Perhaps these differences will provide clues to the shortcuts that the dragonfly brain takes to speed up its calculations.

This backpack that captures signals from electrodes inserted in a dragonfly’s brain was created by Anthony Leonardo, a group leader at Janelia Research Campus. Anthony Leonardo/Janelia Research Campus/HHMI

Dragonflies could also teach us how to implement “attention” on a computer. You likely know what it feels like when your brain is at full attention, completely in the zone, focused on one task to the point that other distractions seem to fade away. A dragonfly can likewise focus its attention. Its nervous system turns up the volume on responses to particular, presumably selected, targets, even when other potential prey are visible in the same field of view. It makes sense that once a dragonfly has decided to pursue a particular prey, it should change targets only if it has failed to capture its first choice. (In other words, using parallel navigation to catch a meal is not useful if you are easily distracted.)

Even if we end up discovering that the dragonfly mechanisms for directing attention are less sophisticated than those people use to focus in the middle of a crowded coffee shop, it’s possible that a simpler but lower-power mechanism will prove advantageous for next-generation algorithms and computer systems by offering efficient ways to discard irrelevant inputs.
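As a purely illustrative toy, and not a description of how the dragonfly’s neurons actually do it, the “stay locked on unless the capture failed” idea can be written as a small selection rule. The function name, the gain value, and the dictionary of response strengths are all assumptions.

```python
def choose_target(responses, locked=None, capture_failed=False, gain=3.0):
    """Pick a target and boost its response relative to the distractors.

    responses: dict mapping a target id to its raw response strength.
    locked: id of the target currently being pursued, if any.
    """
    if locked in responses and not capture_failed:
        # Stay on the chosen prey even if something stronger shows up.
        target = locked
    else:
        # Start fresh, skipping the prey that was just missed if possible.
        candidates = {k: v for k, v in responses.items() if k != locked}
        candidates = candidates or responses
        target = max(candidates, key=candidates.get)
    weighted = {k: (v * gain if k == target else v)
                for k, v in responses.items()}
    return target, weighted

first, _ = choose_target({"a": 0.9, "b": 0.4})
# A stronger distractor "c" appears, but the lock on "a" holds.
held, weighted = choose_target({"a": 0.9, "c": 1.5}, locked=first)
# Only after a failed capture does the selection move on.
switched, _ = choose_target({"a": 0.9, "c": 1.5}, locked=first,
                            capture_failed=True)
print(first, held, switched)  # a a c
```

The appeal of a rule this simple is that irrelevant inputs are discarded with almost no computation, which is the kind of low-power shortcut the paragraph above has in mind.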

The advantages of studying the dragonfly brain do not end with new algorithms; they also can affect systems design. Dragonfly eyes are fast, operating at the equivalent of 200 frames per second: That’s several times the speed of human vision. But their spatial resolution is relatively poor, perhaps just a hundredth of that of the human eye. Understanding how the dragonfly hunts so effectively, despite its limited sensing abilities, can suggest ways of designing more efficient systems. Using the missile-defense problem, the dragonfly example suggests that our antimissile systems with fast optical sensing could require less spatial resolution to hit a target.

The dragonfly isn’t the only insect that could inform neural-inspired computer design today. Monarch butterflies migrate incredibly long distances, using some innate instinct to begin their journeys at the appropriate time of year and to head in the right direction. We know that monarchs rely on the position of the sun, but navigating by the sun requires keeping track of the time of day. If you are a butterfly heading south, you would want the sun on your left in the morning but on your right in the afternoon. So, to set its course, the butterfly brain must read its own circadian rhythm and combine that information with what it is observing.
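The left-in-the-morning, right-in-the-afternoon rule follows from a very crude model of the sun’s motion. The sketch below assumes a Northern Hemisphere observer and a sun that sits due south at noon and sweeps 15 degrees of compass azimuth per hour; the real sun does not move that uniformly, so treat the numbers as illustrative only.

```python
def sun_azimuth_deg(hour):
    """Crude sun azimuth: due south (180) at noon, 15 degrees per hour."""
    return (180.0 + 15.0 * (hour - 12.0)) % 360.0

def sun_relative_to_heading(hour, heading_deg=180.0):
    """Angle of the sun relative to the direction of travel.

    Negative values mean the sun is to the left, positive to the right.
    Headings and azimuths are compass angles, clockwise from north.
    """
    return (sun_azimuth_deg(hour) - heading_deg + 180.0) % 360.0 - 180.0

# A butterfly flying due south at 9 a.m., noon, and 3 p.m.
for hour in (9, 12, 15):
    print(hour, sun_relative_to_heading(hour))
# 9 -45.0   (sun to the left)
# 12 0.0    (sun dead ahead)
# 15 45.0   (sun to the right)
```

Running the function in reverse, a known time of day plus the observed sun angle gives the heading, which is why the butterfly needs its internal clock.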

Other insects, like the Sahara desert ant, must forage for relatively long distances. Once a source of sustenance is found, this ant does not simply retrace its steps back to the nest, likely a circuitous path. Instead it calculates a direct route back. Because the location of an ant’s food source changes from day to day, it must be able to remember the path it took on its foraging journey, combining visual information with some internal measure of distance traveled, and then calculate its return route from those memories.
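Computing a direct route home from a winding outbound trip is a matter of adding up the steps. The sketch below is a generic dead-reckoning calculation, not a model of the ant’s neural circuitry; the function name and the sample path are made up for illustration.

```python
import math

def home_vector(steps):
    """Return (distance, heading_deg) from the current spot back to the nest.

    steps: sequence of (heading_deg, distance) legs of the outbound trip,
    with headings measured counterclockwise from the +x axis.
    """
    steps = list(steps)
    # Accumulate the net displacement from the nest.
    x = sum(d * math.cos(math.radians(h)) for h, d in steps)
    y = sum(d * math.sin(math.radians(h)) for h, d in steps)
    distance = math.hypot(x, y)
    # The way home points opposite to the net displacement.
    heading = math.degrees(math.atan2(-y, -x)) % 360.0
    return distance, heading

# A zigzag outbound path: east 3, north 4, east 3, north 4.
outbound = [(0, 3.0), (90, 4.0), (0, 3.0), (90, 4.0)]
distance, heading = home_vector(outbound)
print(round(distance, 2), round(heading, 2))
```

The ant walked 14 units on the way out but is only about 10 units from the nest, along a heading of roughly 233 degrees.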

While nobody knows what neural circuits in the desert ant perform this task, researchers at the Janelia Research Campus have identified neural circuits that allow the fruit fly to self-orient using visual landmarks. The desert ant and monarch butterfly likely use similar mechanisms. Such neural circuits might one day prove useful in, say, low-power drones.

And what if the efficiency of insect-inspired computation is such that millions of instances of these specialized components can be run in parallel to support more powerful data processing or machine learning? Could the next AlphaZero incorporate millions of antlike foraging architectures to refine its game playing? Perhaps insects will inspire a new generation of computers that look very different from what we have today. A small army of dragonfly-interception-like algorithms could be used to control moving pieces of an amusement park ride, ensuring that individual cars do not collide (much like pilots steering their boats) even in the midst of a complicated but thrilling dance.

No one knows what the next generation of computers will look like, whether they will be part-cyborg companions or centralized resources much like Isaac Asimov’s Multivac. Likewise, no one can tell what the best path to developing these platforms will entail. While researchers developed early neural networks drawing inspiration from the human brain, today’s artificial neural networks often rely on decidedly unbrainlike calculations. Studying the calculations of individual neurons in biological neural circuits (currently only directly possible in nonhuman systems) may have more to teach us. Insects, apparently simple but often astonishing in what they can do, have much to contribute to the development of next-generation computers, especially as neuroscience research continues to drive toward a deeper understanding of how biological neural circuits work.

So next time you see an insect doing something clever, imagine the impact on your everyday life if you could have the brilliant efficiency of a small army of tiny dragonfly, butterfly, or ant brains at your disposal. Maybe computers of the future will give new meaning to the term “hive mind,” with swarms of highly specialized but extremely efficient minuscule processors, able to be reconfigured and deployed depending on the task at hand. With the advances being made in neuroscience today, this seeming fantasy may be closer to reality than you think.

This article appears in the August 2021 print issue as “Lessons From a Dragonfly’s Brain.”